1. GIT - Hugging Face

GitVisionConfig · GitVisionModel · GitConfig · GitProcessor

We’re on a journey to advance and democratize artificial intelligence through open source and open science.

2. Installation - Hugging Face

git clone https://github.com/huggingface/transformers.git; cd transformers; pip install -e . These commands will link the folder you cloned the repository to ...
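
The clone-and-editable-install steps in this snippet could be scripted roughly as follows. This is a minimal sketch, not part of the Transformers documentation: the helper names `editable_install_steps` and `run_steps` are hypothetical, and the script defaults to a dry run that only prints the commands.

```python
import shlex
import subprocess
from typing import List, Optional, Tuple

# One step is (argv, working directory); None means the current directory.
Step = Tuple[List[str], Optional[str]]


def editable_install_steps(repo_url: str, folder: str) -> List[Step]:
    """Build the clone + editable-install steps without running them."""
    return [
        (["git", "clone", repo_url], None),       # fetch the source tree
        (["pip", "install", "-e", "."], folder),  # run inside the clone, like `cd folder`
    ]


def run_steps(steps: List[Step], dry_run: bool = True) -> None:
    """Print each step; execute it only when dry_run is False."""
    for argv, cwd in steps:
        prefix = f"(in {cwd}) " if cwd else ""
        print(prefix + shlex.join(argv))
        if not dry_run:
            subprocess.run(argv, cwd=cwd, check=True)


steps = editable_install_steps(
    "https://github.com/huggingface/transformers.git", "transformers"
)
run_steps(steps)  # dry run: prints the commands only
```

Passing `dry_run=False` would actually execute the commands, which requires `git`, `pip`, and network access.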

3. GIT: A Generative Image-to-text Transformer for Vision and Language

27 May 2022 · Abstract: In this paper, we design and train a Generative Image-to-text Transformer, GIT, to unify vision-language tasks such as image/video ...

In this paper, we design and train a Generative Image-to-text Transformer, GIT, to unify vision-language tasks such as image/video captioning and question answering. While generative models provide a consistent network architecture between pre-training and fine-tuning, existing work typically contains complex structures (uni/multi-modal encoder/decoder) and depends on external modules such as object detectors/taggers and optical character recognition (OCR). In GIT, we simplify the architecture as one image encoder and one text decoder under a single language modeling task. We also scale up the pre-training data and the model size to boost the model performance. Without bells and whistles, our GIT establishes new state of the arts on 12 challenging benchmarks with a large margin. For instance, our model surpasses the human performance for the first time on TextCaps (138.2 vs. 125.5 in CIDEr). Furthermore, we present a new scheme of generation-based image classification and scene text recognition, achieving decent performance on standard benchmarks. Codes are released at https://github.com/microsoft/GenerativeImage2Text.
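
One way to picture "one image encoder and one text decoder under a single language modeling task" is a prefix-style attention mask: image tokens attend to each other, while each text token attends to all image tokens and to the text tokens up to and including itself. The sketch below is illustrative only. The abstract does not specify the masking scheme, so treating it this way is an assumption, and the function name `prefix_lm_mask` is hypothetical.

```python
from typing import List


def prefix_lm_mask(num_image_tokens: int, num_text_tokens: int) -> List[List[bool]]:
    """Return mask[q][k] == True when query position q may attend to key position k.

    Positions 0..num_image_tokens-1 are image tokens; the rest are text tokens.
    """
    total = num_image_tokens + num_text_tokens
    mask = []
    for q in range(total):
        row = []
        for k in range(total):
            if k < num_image_tokens:
                allowed = True        # every position may look at the image prefix
            elif q < num_image_tokens:
                allowed = False       # image tokens never look at text
            else:
                allowed = k <= q      # text is causal: no peeking at later words
            row.append(allowed)
        mask.append(row)
    return mask


# Print a small mask with 2 image tokens and 2 text tokens (1 = may attend).
for row in prefix_lm_mask(2, 2):
    print("".join("1" if allowed else "0" for allowed in row))
```

With such a mask, training reduces to next-token prediction on the text positions, conditioned on the image prefix.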

4. [2403.09394] GiT: Towards Generalist Vision Transformer through ... - arXiv

14 Mar 2024 · Abstract: This paper proposes a simple, yet effective framework, called GiT, simultaneously applicable for various vision tasks only with a ...

This paper proposes a simple, yet effective framework, called GiT, simultaneously applicable for various vision tasks only with a vanilla ViT. Motivated by the universality of the Multi-layer Transformer architecture (e.g., GPT) widely used in large language models (LLMs), we seek to broaden its scope to serve as a powerful vision foundation model (VFM). However, unlike language modeling, visual tasks typically require specific modules, such as bounding box heads for detection and pixel decoders for segmentation, greatly hindering the application of powerful multi-layer transformers in the vision domain. To solve this, we design a universal language interface that empowers the successful auto-regressive decoding to adeptly unify various visual tasks, from image-level understanding (e.g., captioning), over sparse perception (e.g., detection), to dense prediction (e.g., segmentation). Based on the above designs, the entire model is composed solely of a ViT, without any specific additions, offering a remarkable architectural simplification. GiT is a multi-task visual model, jointly trained across five representative benchmarks without task-specific fine-tuning. Interestingly, our GiT builds a new benchmark in generalist performance, and fosters mutual enhancement across tasks, leading to significant improvements compared to isolated training. This reflects a similar impact observed in LLMs. Further enriching training with 27 datasets, GiT achieves strong zero-shot results over va...

5. huggingworld / transformers - GitLab

30 Jun 2020 · Transformers: State-of-the-art Natural Language Processing for PyTorch and TensorFlow 2.0.

6. GIT: A Generative Image-to-text Transformer for Vision and Language

27 May 2022 · In this paper, we design and train a Generative Image-to-text Transformer, GIT, to unify vision-language tasks such as image/video ...

🏆 SOTA for Image Captioning on nocaps-XD near-domain (CIDEr metric)

7. [PDF] Gas Insulated Transformer (GIT) - Mitsubishi Electric

Gas Insulated Transformer (GIT). IEC-60076 part 15 gas-filled power transformers enacted in 2008. Non-flammable and non-explosive. Non-Flammable and Non ...

8. BERTopic - Maarten Grootendorst

Transformers · 6B. LLM & Generative AI · The Algorithm · 6A. Representation Models

Leveraging BERT and a class-based TF-IDF to create easily interpretable topics.

9. GIT: A Generative Image-to-text Transformer for Vision and Language

In this paper, we design and train a Generative Image-to-text Transformer, GIT, to unify vision-language tasks such as image/video captioning and question ...

In this paper, we design and train a Generative Image-to-text Transformer, GIT, to unify vision-language tasks such as image/video captioning and question answering. While generative models provide...

See AlsoiPhone - Buying iPhone

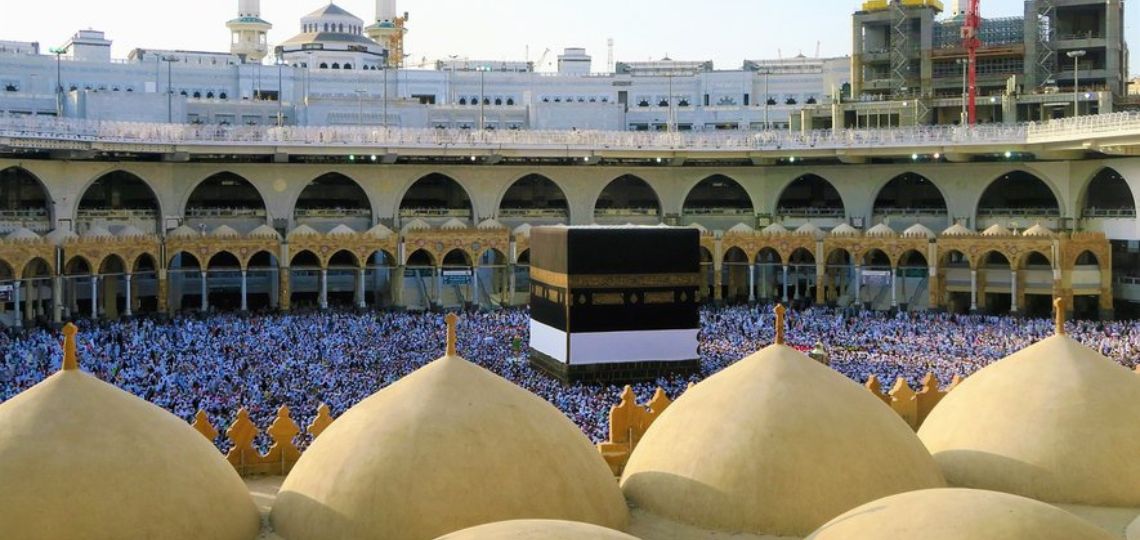

10. 7 Toshiba gas insulated transformers enter operation in Makkah

27 Jun 2024 · Toshiba Energy Systems and Solutions Corporation has installed and activated seven gas insulated transformers (GIT) in the Haram 2 and Haram ...

Toshiba Energy Systems and Solutions Corporation has installed and activated seven gas insulated transformers (GIT) in the Haram 2 and Haram 3 substations serving Makkah, Saudi Arabia.

11. The Illustrated Transformer - Jay Alammar

27 Jun 2018 · The Illustrated Transformer [Blog post]. Retrieved from https://jalammar.github.io/illustrated-transformer/ Note: If you translate any of ...

Discussions: Hacker News (65 points, 4 comments), Reddit r/MachineLearning (29 points, 3 comments)

Translations: Arabic, Chinese (Simplified) 1, Chinese (Simplified) 2, French 1, French 2, Italian, Japanese, Korean, Persian, Russian, Spanish 1, Spanish 2, Vietnamese

Watch: MIT’s Deep Learning State of the Art lecture referencing this post

Featured in courses at Stanford, Harvard, MIT, Princeton, CMU and others

In the previous post, we looked at Attention – a ubiquitous method in modern deep learning models. Attention is a concept that helped improve the performance of neural machine translation applications. In this post, we will look at The Transformer – a model that uses attention to boost the speed with which these models can be trained. The Transformer outperforms the Google Neural Machine Translation model in specific tasks. The biggest benefit, however, comes from how The Transformer lends itself to parallelization. It is in fact Google Cloud’s recommendation to use The Transformer as a reference model to use their Cloud TPU offering. So let’s try to break the model apart and look at how it functions.

The Transformer was proposed in the paper Attention is All You Need. A TensorFlow implementation of it is available as a part of the Tensor2Tensor package. Harvard’s NLP group created a guide annotating the paper with PyTorch implementation. In this post, we will attempt to oversimplify things a bit and introduce the concepts one by one to hopefully make it easier to...

12. SentenceTransformers Documentation — Sentence ...

SentenceTransformers Documentation. Note: Sentence Transformers v3.0 was just released, introducing a new training API for Sentence Transformer ...

Sentence Transformers

13. Installation — Transformer Engine 1.7.0 documentation - NVIDIA Docs

Execute the following command to install the latest stable version of Transformer Engine: pip install git+https://github.com/NVIDIA/TransformerEngine.git@stable.

Linux x86_64
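
The `pip install git+...@stable` command in this result uses pip's VCS requirement syntax: a `git+` prefix on the repository URL, optionally followed by `@` and a branch, tag, or commit. A small sketch of assembling such a requirement string is shown below; the helper name `pip_vcs_requirement` is hypothetical and not part of pip or Transformer Engine.

```python
from typing import Optional


def pip_vcs_requirement(repo_url: str, ref: Optional[str] = None) -> str:
    """Build a pip requirement string that installs directly from a Git repository."""
    requirement = "git+" + repo_url
    if ref:
        requirement += "@" + ref  # pin to a branch, tag, or commit
    return requirement


# Reproduces the requirement string from the command quoted in the snippet.
print(pip_vcs_requirement("https://github.com/NVIDIA/TransformerEngine.git", "stable"))
```

The resulting string is what would be passed to `pip install`.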

14. PRESSR: Seven Toshiba gas insulated transformers enter ...

1 day ago · ... gas insulated transformers (GIT) in the Haram 2 and Haram 3 substations serving Makkah, Saudi Arabia, significantly contributing to…

First published: 05-Jul-2024 10:24:40. KAWASAKI, Japan--(BUSINESS WIRE)-- Toshiba Energy Systems and Solutions Corporation (“Toshiba”) has installed and activated seven gas insulated transformers (GIT) in the Haram 2 and Haram 3 substations serving Makkah, Saudi Arabia, significantly contributing to…

15. Simple Transformers

Simple Transformers. Using Transformer models has never been simpler! Built ... © 2024 Thilina Rajapakse.

Using Transformer models has never been simpler! Built-in support for: Text Classification, Token Classification, Question Answering, Language Modeling, Language Generation, Multi-Modal Classification, Conversational AI, Text Representation Generation.

16. Install spaCy · spaCy Usage Documentation

... transformers] (with multiple comma-separated extras). See the [options ... git clone https://github.com/explosion/spaCy cd spaCy make. You can configure ...

spaCy is a free open-source library for Natural Language Processing in Python. It features NER, POS tagging, dependency parsing, word vectors and more.

17. How to Incorporate Tabular Data with HuggingFace Transformers - Medium

23 Oct 2020 · [Colab] [Github]. By Ken Gu. Transformer-based models are a game-changer when it comes to using unstructured text data. As of September 2020 ...

18. Toshiba inaugurates gas-insulated transformers in Makkah - energynews

4 days ago · The recent installation of seven gas-insulated transformers (GIT) by Toshiba Energy Systems and Solutions Corporation marks an important ...

Toshiba installs seven gas-insulated transformers in Makkah substations, boosting reliability of power supply.